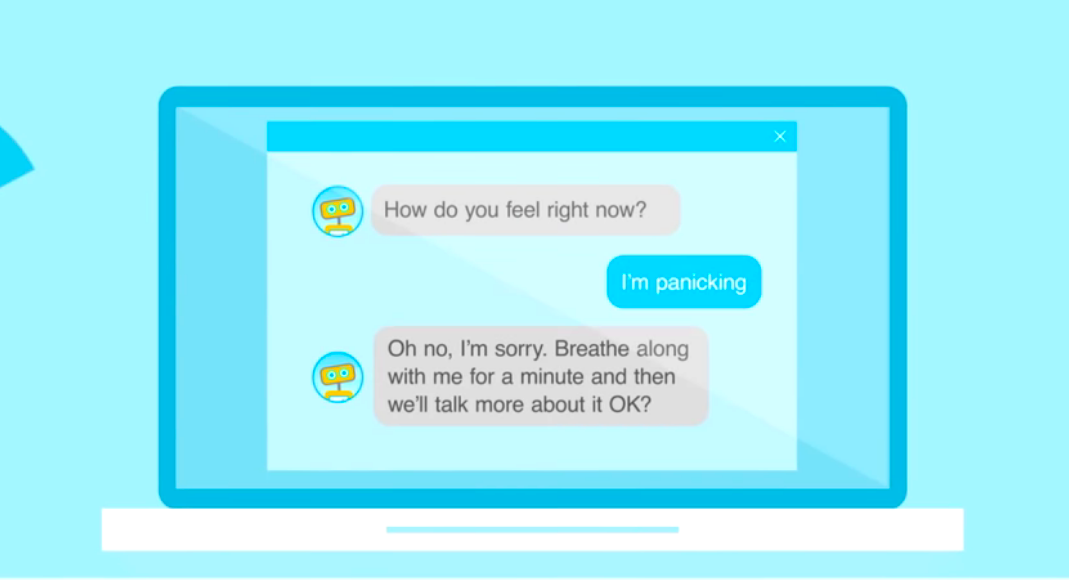

Image credit: Woebot

“I have depression.”

Bot: Just think positive!

“That doesn’t help at all...”

Bot: A brisk walk can help improve your mood :)

You don’t have to be in therapy to know how frustratingly unhelpful conversations like this can be. With chatbots cropping up as a more accessible approach to mental health, it’s increasingly important that they be designed to handle these sensitive contexts with care.

So let’s get to know a bit more about what chatbots are already doing out there and why sensitive design should be the next big thing in chatbot development.

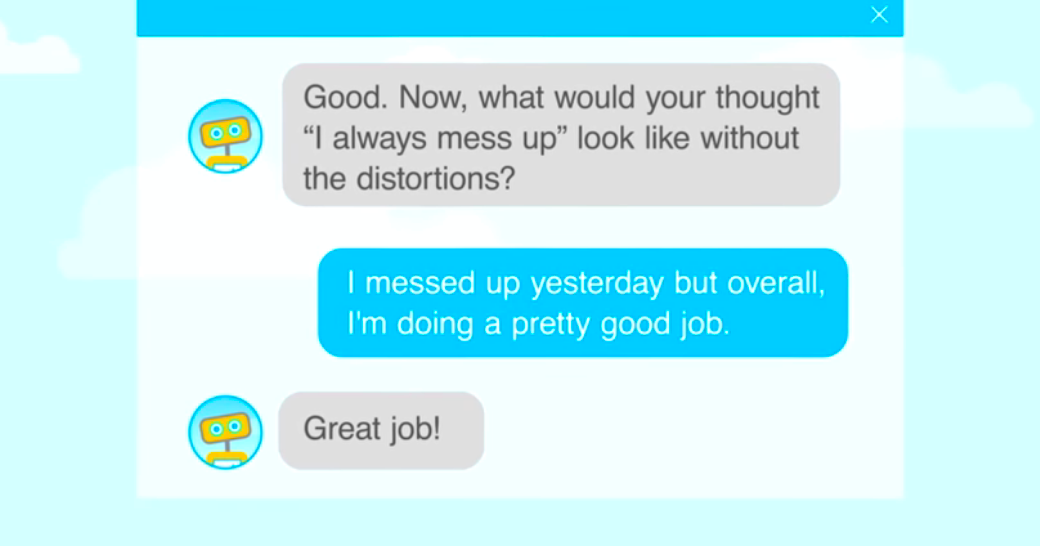

Image credit: Woebot

With depression as the leading cause of disability across the globe (closely followed by anxiety), chatbots have been slowly gaining ground in the landscape of digital therapy.

On the one hand, there’s a staggering shortage of mental health professionals. On the other, even when a professional is available, there are high fees, eternal waiting lists, and inconvenient schedules to contend with. So chatbots have come to the rescue with the help of Natural Language Processing (NLP) and a very low price tag.

One great example is Woebot, a friendly and somewhat dorky chatbot that channels a cognitive behavioral therapy (CBT) therapist for anyone with a mobile device and an internet connection. According to its website, Woebot led to significant reductions in symptoms of anxiety and depression during a two-week study at Stanford University. A definite win for chatbots.

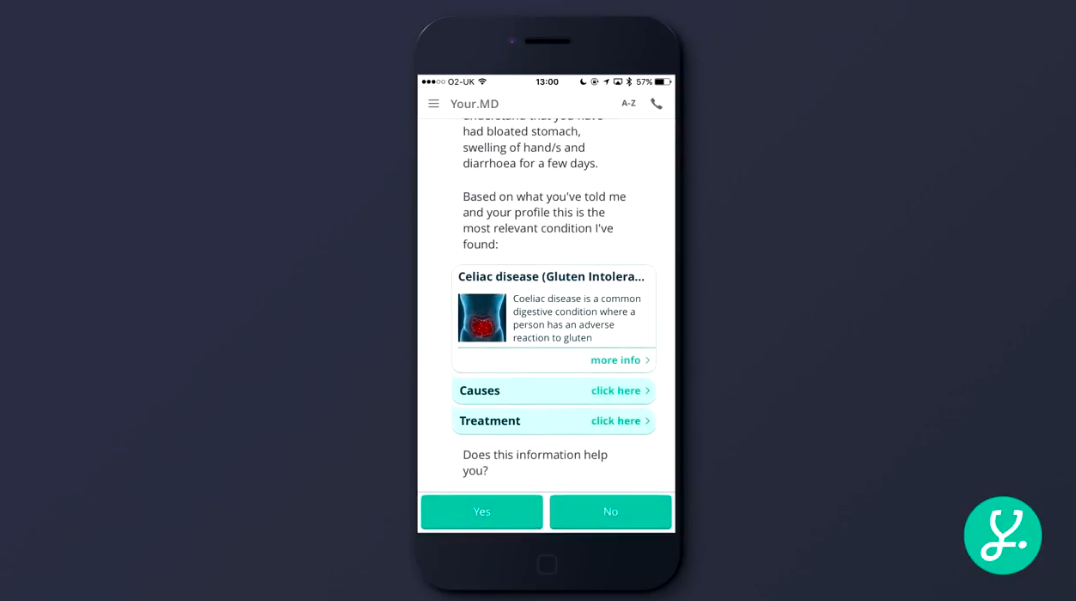

Image credit: Your.MD

At some desperate point in your life, you’ve probably taken one of those online quizzes where you select your symptoms and discover that you have some terrifying disease. This is different.

Symptom-checking chatbots like Your.MD, Infermedica, and Buoy Health (to name a few) use NLP and Machine Learning to provide personalized health information to users who may not have immediate access to a doctor.

Users can either describe their symptoms in conversation with a sympathetic bot or use images and speech to provide additional information. Sensely, for instance, takes the form of a virtual nurse and then connects the user to a physician through video chat.

They all sound pretty useful, but are they a replacement for human professionals? Not really. Are they a good start for digital healthcare? Definitely.

When designing chatbot conversations for contexts like healthcare, you don’t want the bot to resemble a standard retail bot that’s trying to sell you the latest jacket. We’re talking about people in moments of vulnerability. They’re looking for medical advice or for “someone” to confide their deepest thoughts and feelings in. They need to feel that the chatbot they’re talking to is smart, tactful, and confidential.

You can probably imagine the basic guidelines: no medical jargon, no overly complex explanations, avoid scaring the user, etc. It seems obvious until you actually get into it and realize how difficult it is to strike a balance between medically informative and friendly.

You’ll also find that there are very few frameworks for designers in this particular field. To help address this, UI designer Brooke Hawkins from Nuance will be giving a special workshop at VOICE this July. So if you’re a designer (or a curious developer) interested in a toolkit for approaching chatbot design in these sensitive spaces, get your access pass here. But be quick! Time’s a-tickin’.


VOICE Copyright © 2018-2022 | All rights reserved: ModevNetwork LLC